File-system-based file change history with snapshots: LVM, ZFS, BTRFS

After I stopped using a cloud disk and moved my data to my own devices, I took the next step: finding a way to store the change history of my text files. Other file formats aren't considered, as I don't edit them.

A common solution you might think of for this purpose is tracking files with Git. It does more than enough, but having and maintaining Git's database means automating many kinds of operations that aren't related to file changes, and I would like to work with files only. Anyway, I checked whether there are FUSE-based Git file systems and found a couple of them: RepoFS and gitfs. Great, they track file changes automatically. However, a Git database, branches and a FUSE layer make the solution more complicated than I really need, which is only a history of changes. An alternative I had actually planned to look at is LVM snapshots, as I already had experience creating them and see good potential in them for reaching the goal.

The idea of creating snapshots came to mind because LVM comes built into Ubuntu and my laptop disk has been managed by it since the OS installation. Should I jump straight to automating snapshots? No, it is worth spending some time looking for other suitable tools that could lead to a better result. I had heard that ZFS and BTRFS have a snapshot feature as well, so they are two more candidates for storing the history.

With the tools determined, here are the basic criteria for choosing the most suitable one:

- Targeting a directory to snapshot.
- How many snapshots can be made without issues for different automation scenarios; about 360 snapshots a year would be satisfactory.
- Snapshotting speed.
- Disk space used by snapshots.
- Diff view.
- File events to track.

Installation

LVM is preinstalled in Ubuntu.

ZFS can be compiled from source code, but installing it from the PPA jonathonf/zfs is a faster way.

$ sudo add-apt-repository ppa:jonathonf/zfs

$ sudo apt install zfsutils-linux

BTRFS is developed by the Linux community and included in the standard Ubuntu package repository.

$ sudo apt install btrfs-progs

Test volumes setup

First of all, a dedicated logical volume on an HDD is created and mounted for each file system.

$ pvcreate /dev/mapper/storagebox

$ vgcreate vgstoragebox /dev/mapper/storagebox

ZFS is the monstrous FS that took the longest time to set up.

$ lvcreate --size 2G vgstoragebox --name zfs

$ lvs vgstoragebox

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

zfs vgstoragebox -wi-a----- 2.00g

$ zpool create zpool /dev/vgstoragebox/zfs

$ zpool list

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

zpool 1.88G 108K 1.87G - - 0% 0% 1.00x ONLINE -

$ zfs mount

zpool /zpool

$ zfs create zpool/documents

$ zfs list

NAME USED AVAIL REFER MOUNTPOINT

zpool 158K 1.75G 24K /zpool

zpool/documents 24K 1.75G 24K /zpool/documents

ZFS basically works with pools and datasets. Many operations on them can be done through its CLI only; I got various side effects while experimenting with standard shell file operations. It is very easy to get confused by the difference between zpool/documents (the dataset name) and /zpool/documents (its mountpoint). The pool and the dataset are ready and mounted.

While learning BTRFS, I realized it is a truly lightweight FS compared to ZFS: a single root directory, nothing hidden, designed to reside on a single server but capable of grouping multiple devices. Most of the shell operations I executed were the standard ones, and setting it up took drastically less time.

$ lvcreate --size 2G vgstoragebox --name btrfs --yes

$ mkfs.btrfs /dev/vgstoragebox/btrfs

btrfs-progs v5.4.1

See http://btrfs.wiki.kernel.org for more information.

Label: (null)

UUID: 9bb08305-55a7-4bd2-bd8c-f03530a1c5d6

Node size: 16384

Sector size: 4096

Filesystem size: 2.00GiB

Block group profiles:

Data: single 8.00MiB

Metadata: DUP 102.38MiB

System: DUP 8.00MiB

SSD detected: no

Incompat features: extref, skinny-metadata

Checksum: crc32c

Number of devices: 1

Devices:

ID SIZE PATH

1 2.00GiB /dev/vgstoragebox/btrfs

$ mkdir /btrfs

$ mount /dev/vgstoragebox/btrfs /btrfs

$ btrfs subvolume create /btrfs/documents

Create subvolume '/btrfs/documents'

Interaction with BTRFS is mostly performed through the subvolume subcommand.

LVM's mechanics are hard to understand, as there are two types of snapshots. The one I need is called thin, because its size is allocated dynamically from a pool. The standard type requires the snapshot size to be declared explicitly and fixed up front; in other words, you have to know in advance how much the files will change before the next snapshot. I don't think calculating the size of file changes is worth the time, so the thin type is chosen.

According to the man lvmthin description, the thin snapshot mechanics consist of five abstractions. Each one has its own parameters, and combinations of those parameters produce different effects. LVM already seems to be the most complicated of the competing tools, and this is only the snapshotting functionality of the entire LVM system.

$ sudo ./snapit-lvm.sh

Logical volume "lvm-snap-1" created.

Logical volume "lvm-snap-2" created.

Logical volume "lvm-snap-3" created.

Logical volume "lvm-snap-4" created.

...

$ sudo lvchange --activate y -K vgStorage/lvm-snap-27

$ sudo mkdir /lvm-fs/snap-27

$ sudo mount /dev/vgStorage/lvm-snap-27 /lvm-fs/snap-27

$ diff -yr /lvm-fs/lvm-doc.txt /lvm-fs/snap-27/lvm-doc.txt

...

line27 line27

line28 <

line29 <

line30 <

Many abstractions lead to many operations; here they are.

$ sudo lvcreate --name lvm-pool --size 2G vgstoragebox

Logical volume "lvm-pool" created.

$ sudo lvcreate --name lvm-pool-meta --size 200m vgstoragebox

Logical volume "lvm-pool-meta" created.

$ sudo lvs vgstoragebox

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

btrfs vgstoragebox -wi-ao---- 2.00g

home-backup vgstoragebox -wi-ao---- 300.00g

lvm-pool vgstoragebox -wi-a----- 2.00g

lvm-pool-meta vgstoragebox -wi-a----- 200.00m

zfs vgstoragebox -wi-a----- 2.00g

$ sudo lvconvert --type thin-pool --poolmetadata vgstoragebox/lvm-pool-meta vgstoragebox/lvm-pool

Thin pool volume with chunk size 64.00 KiB can address at most 15.81 TiB of data.

WARNING: Converting vgstoragebox/lvm-pool and vgstoragebox/lvm-pool-meta to thin pool's data and metadata volumes with metadata wiping.

THIS WILL DESTROY CONTENT OF LOGICAL VOLUME (filesystem etc.)

Do you really want to convert vgstoragebox/lvm-pool and vgstoragebox/lvm-pool-meta? [y/n]: y

Converted vgstoragebox/lvm-pool and vgstoragebox/lvm-pool-meta to thin pool.

$ sudo lvs -a vgstoragebox

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

btrfs vgstoragebox -wi-ao---- 2.00g

home-backup vgstoragebox -wi-ao---- 300.00g

lvm-pool vgstoragebox twi-a-tz-- 2.00g 0.00 8.03

[lvm-pool_tdata] vgstoragebox Twi-ao---- 2.00g

[lvm-pool_tmeta] vgstoragebox ewi-ao---- 200.00m

[lvol0_pmspare] vgstoragebox ewi------- 200.00m

zfs vgstoragebox -wi-a----- 2.00g

$ sudo lvcreate --name lvm --virtualsize 2G --thinpool lvm-pool vgstoragebox

Logical volume "lvm" created.

$ sudo mkfs.ext4 /dev/vgstoragebox/lvm

mke2fs 1.45.5 (07-Jan-2020)

Discarding device blocks: done

Creating filesystem with 524288 4k blocks and 131072 inodes

Filesystem UUID: 21e0ea4c-b173-406c-8fb5-420edadc830b

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912

Allocating group tables: done

Writing inode tables: done

Creating journal (16384 blocks): done

Writing superblocks and filesystem accounting information: done

$ lsblk

sda 8:0 0 1.8T 0 disk

└─luks-4b0ed59d-059f-4f09-b204-1de0e18646ac 253:3 0 1.8T 0 crypt

├─vgstoragebox-zfs 253:5 0 2G 0 lvm

├─vgstoragebox-btrfs 253:6 0 2G 0 lvm /media/vol/9bb08305-55a7-4bd2-bd8c-f03530a1c5d6

├─vgstoragebox-lvm--pool_tmeta 253:7 0 200M 0 lvm

│ └─vgstoragebox-lvm--pool-tpool 253:9 0 2G 0 lvm

│ ├─vgstoragebox-lvm--pool 253:10 0 2G 1 lvm

│ └─vgstoragebox-lvm 253:11 0 2G 0 lvm /lvm-fs

└─vgstoragebox-lvm--pool_tdata 253:8 0 2G 0 lvm

└─vgstoragebox-lvm--pool-tpool 253:9 0 2G 0 lvm

├─vgstoragebox-lvm--pool 253:10 0 2G 1 lvm

└─vgstoragebox-lvm 253:11 0 2G 0 lvm /lvm-fs

The resulting LVM volume tree is confusing.

It turned out that keeping the logical volumes and snapshots in a working state is cumbersome. You have to define a size limit for the volume and snapshots and keep track of it not being exceeded. But what happens if the limit is exceeded? I don't know how to figure that out. For the simple case of keeping file history, I can already conclude that LVM is overkill.

Snapshots creation

The time measurement scenario is identical for all the tools: within a loop, a text line is appended to a text document and then a snapshot of the document's directory is taken. The shell scripts for each tool are snapit-zfs.sh, snapit-btrfs.sh and snapit-lvm.sh.
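The snapit-*.sh scripts themselves aren't reproduced here. A minimal Python sketch of the same loop follows, assuming the logged `execution time: 0:00.04` lines come from GNU time's %E (elapsed) format and that the scripts issue plain `zfs snapshot`, `btrfs subvolume snapshot` and thin `lvcreate --snapshot` commands with the snapshot names seen in the outputs below. Those command lines are assumptions, and the helper names (`SNAPSHOT_COMMANDS`, `snapit`, `format_elapsed`) are hypothetical.

```python
from __future__ import annotations

import subprocess
import time
from pathlib import Path

# Hypothetical snapshot commands modelled on the names used in this post;
# the real snapit-*.sh scripts may build them differently.
SNAPSHOT_COMMANDS = {
    "zfs": lambda i: ["zfs", "snapshot", f"zpool/documents@snap-{i}"],
    "btrfs": lambda i: ["btrfs", "subvolume", "snapshot", "/btrfs/documents",
                        f"/btrfs/snapshots/documents-snap{i}"],
    # Without --size, a snapshot of a thin LV is itself a thin snapshot.
    "lvm": lambda i: ["lvcreate", "--snapshot", "--name", f"lvm-snap-{i}",
                      "vgstoragebox/lvm"],
}


def format_elapsed(seconds: float) -> str:
    """Format a duration like GNU time's %E: [hours:]minutes:seconds."""
    seconds = round(seconds, 2)
    minutes, secs = divmod(seconds, 60)
    hours, minutes = divmod(int(minutes), 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:05.2f}"
    return f"{minutes}:{secs:05.2f}"


def snapit(kind: str, document: Path, count: int, runner=subprocess.run) -> list[float]:
    """Append one line, take one snapshot, repeat; return each snapshot's duration."""
    make_command = SNAPSHOT_COMMANDS[kind]
    durations = []
    for i in range(1, count + 1):
        with document.open("a") as f:
            f.write(f"line{i}\n")
        start = time.perf_counter()
        runner(make_command(i), check=True)  # only the snapshot command is timed
        elapsed = time.perf_counter() - start
        durations.append(elapsed)
        print(f"execution time: {format_elapsed(elapsed)}")
    return durations
```

It would be run as root, e.g. `snapit("btrfs", Path("/btrfs/documents/btrfs-doc.txt"), 2000)`. The `runner` parameter only exists so the loop can be tried without touching a real file system.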

$ sudo ./snapit-zfs.sh 2>&1 | tee output-zfs.txt

execution time: 0:00.04

execution time: 0:00.69

execution time: 0:00.03

...

$ sudo zfs list -o space -r

NAME AVAIL USED USEDSNAP USEDDS USEDREFRESERV USEDCHILD

zpool 1.67G 79.9M 0B 24K 0B 79.9M

zpool/documents 1.67G 39.5M 39.5M 40.5K 0B 0B

zpool/documents@snap-1 - 12.5K - - - -

zpool/documents@snap-2 - 12.5K - - - -

...

zpool/documents@snap-1998 - 28.5K - - - -

zpool/documents@snap-1999 - 28.5K - - - -

zpool/documents@snap-2000 - 12K - - - -

By default, ZFS doesn't mount the snapshots into the file system.
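ZFS still makes every snapshot reachable without an explicit mount: each dataset has a hidden `.zfs/snapshot` directory under its mountpoint (controlled by the snapdir property, hidden by default), and its entries are mounted on first access. The sketch below, an illustration rather than part of the author's scripts, translates a snapshot name such as zpool/documents@snap-1997 into that path, which also makes the difference between a dataset name and its mountpoint explicit. The helper names are hypothetical; `zfs get -H -o value mountpoint` is the standard ZFS CLI.

```python
import subprocess
from pathlib import Path


def dataset_mountpoint(dataset: str, runner=subprocess.run) -> Path:
    """Ask ZFS where a dataset such as zpool/documents is mounted."""
    result = runner(
        ["zfs", "get", "-H", "-o", "value", "mountpoint", dataset],
        check=True, capture_output=True, text=True,
    )
    value = result.stdout.strip()
    if not value.startswith("/"):  # e.g. "none" or "legacy"
        raise ValueError(f"{dataset} has no usable mountpoint: {value!r}")
    return Path(value)


def snapshot_dir(snapshot: str, runner=subprocess.run) -> Path:
    """Map 'pool/dataset@snap' to its directory under the hidden .zfs entry."""
    dataset, _, snap = snapshot.partition("@")
    if not snap:
        raise ValueError(f"not a snapshot name: {snapshot!r}")
    return dataset_mountpoint(dataset, runner) / ".zfs" / "snapshot" / snap
```

For zpool/documents@snap-1997 this yields /zpool/documents/.zfs/snapshot/snap-1997, so a plain `diff` against the file inside it should work without `mount.zfs`.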

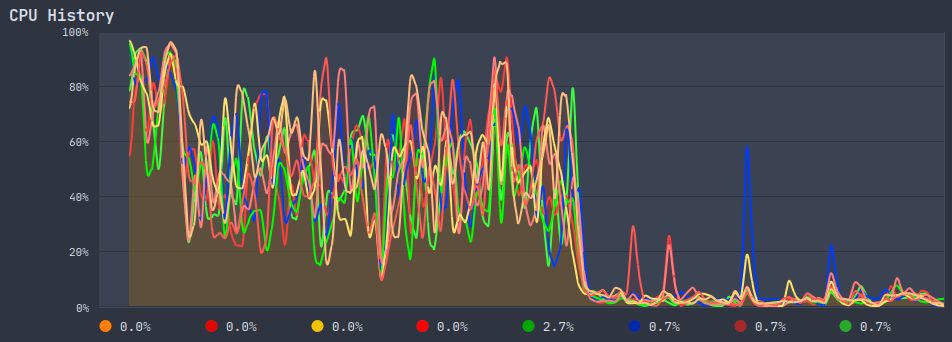

Interestingly, the ZFS snapshotting scenario loaded the CPU significantly.

BTRFS's turn.

$ sudo btrfs subvolume create /btrfs/snapshots

Create subvolume '/btrfs/snapshots'

$ ./snapit-btrfs.sh 2>&1 | tee output-btrfs.txt

Create a snapshot of '/btrfs/documents' in '/btrfs/snapshots/documents-snap1'

execution time: 0:00.33

Create a snapshot of '/btrfs/documents' in '/btrfs/snapshots/documents-snap2'

execution time: 0:00.00

...

$ tree -vahpfugi /btrfs/snapshots

[drwxr-xr-x root root 26] /btrfs/snapshots/documents-snap1

[-rw-r--r-- root root 6] /btrfs/snapshots/documents-snap1/btrfs-doc.txt

[drwxr-xr-x root root 26] /btrfs/snapshots/documents-snap2

[-rw-r--r-- root root 12] /btrfs/snapshots/documents-snap2/btrfs-doc.txt

...

[drwxr-xr-x root root 26] /btrfs/snapshots/documents-snap1999

[-rw-r--r-- root root 16K] /btrfs/snapshots/documents-snap1999/btrfs-doc.txt

[drwxr-xr-x root root 26] /btrfs/snapshots/documents-snap2000

[-rw-r--r-- root root 16K] /btrfs/snapshots/documents-snap2000/btrfs-doc.txt

In contrast to ZFS, running the scenario didn't load the CPU.

LVM's turn.

$ sudo ./snapit-lvm.sh 2>&1 | tee output-lvm.txt

WARNING: Sum of all thin volume sizes (4.00 GiB) exceeds the size of thin pool vgstoragebox/lvm-pool (2.00 GiB).

WARNING: You have not turned on protection against thin pools running out of space.

WARNING: Set activation/thin_pool_autoextend_threshold below 100 to trigger automatic extension of thin pools before they get full.

Logical volume "lvm-snap-1" created.

execution time: 0:00.79

...

WARNING: Sum of all thin volume sizes (<2.95 TiB) exceeds the size of thin pool vgstoragebox/lvm-pool and the size of whole volume group (<1.82 TiB).

WARNING: You have not turned on protection against thin pools running out of space.

WARNING: Set activation/thin_pool_autoextend_threshold below 100 to trigger automatic extension of thin pools before they get full.

Logical volume "lvm-snap-1508" created.

execution time: 0:00.44

WARNING: Sum of all thin volume sizes (<2.95 TiB) exceeds the size of thin pool vgstoragebox/lvm-pool and the size of whole volume group (<1.82 TiB).

WARNING: You have not turned on protection against thin pools running out of space.

WARNING: Set activation/thin_pool_autoextend_threshold below 100 to trigger automatic extension of thin pools before they get full.

VG vgstoragebox 16059 metadata on /dev/mapper/luks-4b0ed59d-059f-4f09-b204-1de0e18646ac (521716 bytes) exceeds maximum metadata size (521472 bytes)

Failed to write VG vgstoragebox.

Command exited with non-zero status 5

A few words here: when I was fixing some issues and got to removing the LVM volumes, the process turned out to be very troublesome. It wasn't simply removing snapshots but tinkering with putting all the technical volumes into a state that allows releasing the target snapshots' space. When they were finally ready, removal was very slow, around 5 seconds per snapshot.

The WARNING messages in the output are a consequence of the complex thin-pool mechanics. The last message reports that the VG metadata has reached its maximum size: with the default settings, only 1508 volumes can be created.
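The failing limit is the volume group's metadata, not the thin pool: every logical volume, thin snapshots included, adds a record to the VG metadata, and how large that metadata may grow depends on the metadata area reserved when the physical volume was created (pvcreate has a --metadatasize option for this). At the target pace of 360 snapshots a year, 1508 volumes is a bit over four years (1508 / 360 ≈ 4.2). A hedged sketch for keeping an eye on the area with vgs follows; the helper names are hypothetical, and vg_mda_free is best treated as a trend indicator, since its exact relation to the limit printed in the error message is an assumption not checked here.

```python
from __future__ import annotations

import subprocess


def parse_mda(output: str) -> tuple[int, int]:
    """Parse '<free> <size>' as printed by vgs with --noheadings --nosuffix --units b."""
    free, size = (int(float(field)) for field in output.split())
    return free, size


def vg_metadata_area(vg: str, runner=subprocess.run) -> tuple[int, int]:
    """Return (free, size) in bytes of the VG metadata area as reported by vgs."""
    result = runner(
        ["vgs", "--noheadings", "--nosuffix", "--units", "b",
         "-o", "vg_mda_free,vg_mda_size", vg],
        check=True, capture_output=True, text=True,
    )
    return parse_mda(result.stdout)
```

A snapshot script could call `vg_metadata_area("vgstoragebox")` before each `lvcreate` and stop early once the free space gets small, instead of failing with exit status 5.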

And again, with its setup and snapshot processes, LVM is the most complicated tool.

Performance

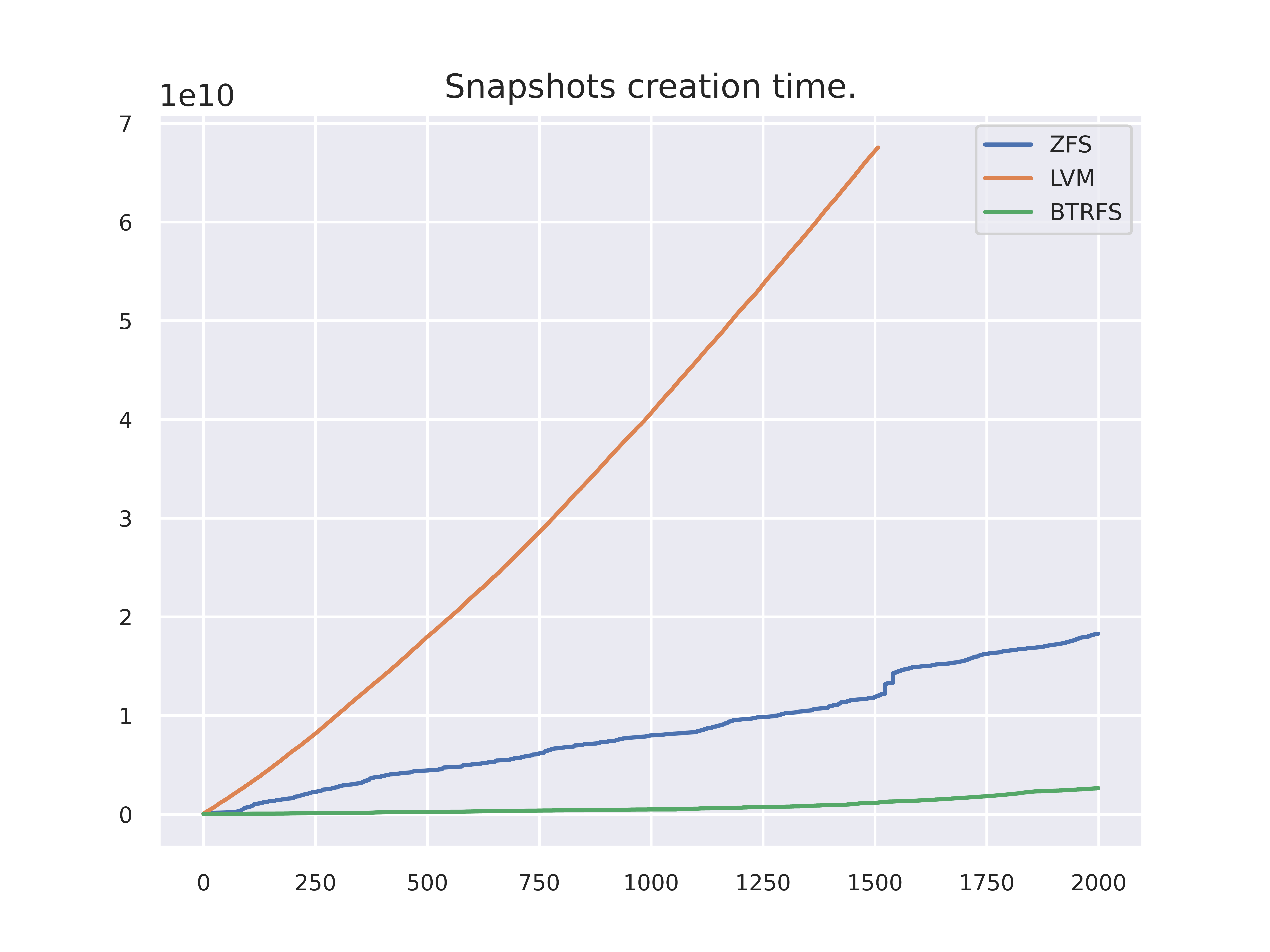

To visualize how fast each FS is, the chart-building script chart.py reads the log files from the previous section (output-zfs.txt, output-btrfs.txt, output-lvm.txt), parses the text and extracts the execution times.
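chart.py itself isn't shown. A minimal sketch of its parsing step could look like this, assuming the logs contain lines in GNU time's elapsed format (`execution time: 0:00.04`, i.e. [hours:]minutes:seconds) and that everything else in them (WARNING lines, "Logical volume ... created") can be skipped. The function names are hypothetical and the plotting part is left out.

```python
from __future__ import annotations

import re
from pathlib import Path

# "execution time: 0:00.04" or, past an hour, "execution time: 1:02:05.00".
EXEC_TIME = re.compile(r"^execution time: (?:(\d+):)?(\d+):(\d+(?:\.\d+)?)\s*$")


def parse_durations(text: str) -> list[float]:
    """Return every execution time found in a log, converted to seconds."""
    durations = []
    for line in text.splitlines():
        match = EXEC_TIME.match(line.strip())
        if match:  # all other log lines are ignored
            hours, minutes, seconds = match.groups()
            durations.append(int(hours or 0) * 3600 + int(minutes) * 60 + float(seconds))
    return durations


def load_logs(names=("output-zfs.txt", "output-btrfs.txt", "output-lvm.txt")) -> dict[str, list[float]]:
    """Map each log file name to its list of snapshot durations."""
    return {name: parse_durations(Path(name).read_text()) for name in names}
```

Each list can then be plotted against its index (the snapshot number) with any plotting library.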

LVM grows linearly, while ZFS grows less obviously, apparently exponentially. I noticed this while creating an order of magnitude more than 2000 ZFS snapshots during the experiments: execution became slower with each next snapshot.

BTRFS timing stays roughly constant.
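Whether a curve is flat, linear or accelerating can also be checked numerically instead of by eye. A rough heuristic, written as a hypothetical helper over the durations parsed from the logs: compare the least-squares slope of the first and second half of the run; a clearly steeper second half points to faster-than-linear growth. The thresholds are arbitrary assumptions, and noisy timings may need smoothing first.

```python
from __future__ import annotations


def slope(values: list[float]) -> float:
    """Least-squares slope of values against their index 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var


def growth_kind(durations: list[float], flat: float = 0.1, accel: float = 1.5) -> str:
    """Classify snapshot timings as 'flat', 'linear' or 'accelerating' (heuristic)."""
    n = len(durations)
    if n < 4:
        raise ValueError("need at least four measurements")
    mean = sum(durations) / n
    # Total rise along the fitted line, compared with the average duration.
    if abs(slope(durations)) * (n - 1) <= flat * mean:
        return "flat"
    half = n // 2
    early, late = slope(durations[:half]), slope(durations[half:])
    return "accelerating" if late > accel * early else "linear"
```

With the three log files parsed, `growth_kind` applied to each list would be expected to reproduce the visual reading of the chart, if the heuristic's thresholds suit the data.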

At this point, the details gathered from the experiments are enough to choose the best deal, which is BTRFS. Still, extracting some additional information is worthwhile, as the file system is going to be used in practice and its behavior should be well understood.

Displaying diff

ZFS is able to run diff without mounting a snapshot, but it doesn't display the content difference, only which files changed and their attributes:

$ sudo zfs diff -F zpool/documents@snap-30 zpool/documents@snap-400

M F /zpool/documents/zfs-doc.txt
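`zfs diff -F` prints one line per changed path: a change type (- removed, + created, M modified, R renamed), a file-type character (F for a regular file, / for a directory, @ for a symlink, among others) and the path. A small parser like the following, a hypothetical helper, makes that output usable from a script, for example to decide which snapshots are worth mounting for a content diff.

```python
from __future__ import annotations

from typing import NamedTuple

CHANGE_TYPES = {"-": "removed", "+": "created", "M": "modified", "R": "renamed"}
# Only the most common file-type markers; unknown ones are kept as-is.
FILE_TYPES = {"F": "regular file", "/": "directory", "@": "symlink"}


class Change(NamedTuple):
    change: str
    file_type: str
    path: str  # for renames this field holds both paths around "->"


def parse_zfs_diff_f(output: str) -> list[Change]:
    """Parse `zfs diff -F` output: change type, file type and path per line."""
    changes = []
    for line in output.splitlines():
        if not line.strip():
            continue
        change, file_type, path = line.split(None, 2)  # the path may contain spaces
        changes.append(Change(CHANGE_TYPES.get(change, change),
                              FILE_TYPES.get(file_type, file_type), path))
    return changes
```

For the output above, the result is a single `Change("modified", "regular file", "/zpool/documents/zfs-doc.txt")`.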

$ sudo mkdir /zpool/documents-snap-1997

$ sudo mount.zfs zpool/documents@snap-1997 /zpool/documents-snap-1997

$ diff --side-by-side /zpool/documents/zfs-doc.txt /zpool/documents-snap-1997/zfs-doc.txt

...

line1996 line1996

line1997 line1997

line1998 <

line1999 <

line2000 <

BTRFS has no command to show a file-attributes diff. To display the content difference, there is the standard diff command. By default, once the root file system is mounted, all the snapshots are visible.

$ diff --side-by-side /btrfs/documents/btrfs-doc.txt /btrfs/snapshots/documents-snap1997

...

line1996 line1996

line1997 line1997

line1998 <

line1999 <

line2000 <
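The same content comparison can be scripted without calling diff, using Python's standard difflib. A minimal sketch with hypothetical helper names, assuming the snapshot copy lives at /btrfs/snapshots/documents-snapN/btrfs-doc.txt as in the listing above:

```python
from __future__ import annotations

import difflib
from pathlib import Path


def appended_lines(current: Path, snapshot: Path) -> list[str]:
    """Lines present in the current file but not in its snapshot copy."""
    old = snapshot.read_text().splitlines()
    new = current.read_text().splitlines()
    # ndiff marks lines that only exist in the second sequence with "+ ".
    return [line[2:] for line in difflib.ndiff(old, new) if line.startswith("+ ")]


def unified(current: Path, snapshot: Path) -> str:
    """A unified diff from the snapshot copy to the current file."""
    old = snapshot.read_text().splitlines(keepends=True)
    new = current.read_text().splitlines(keepends=True)
    return "".join(difflib.unified_diff(old, new, fromfile=str(snapshot), tofile=str(current)))
```

For the case above, `appended_lines(Path("/btrfs/documents/btrfs-doc.txt"), Path("/btrfs/snapshots/documents-snap1997/btrfs-doc.txt"))` should return the three lines that diff marks with `<`.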

There is a hack for extracting a file-attributes diff using send and receive.
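One way to do it is to send the difference between two snapshots without file data and let receive print the stream instead of applying it: `btrfs send --no-data -p PARENT SNAPSHOT | btrfs receive --dump`. Note that btrfs send only accepts read-only snapshots, while the snapshots above were created writable (the log says "Create a snapshot", not "Create a readonly snapshot"), so they would have to be taken with `btrfs subvolume snapshot -r` first. The wrapper below is a hypothetical sketch and needs root.

```python
from __future__ import annotations

import subprocess


def send_dump_commands(parent: str, snapshot: str) -> tuple[list[str], list[str]]:
    """argv lists for: btrfs send --no-data -p PARENT SNAPSHOT | btrfs receive --dump"""
    send = ["btrfs", "send", "--no-data", "-p", parent, snapshot]
    receive = ["btrfs", "receive", "--dump"]
    return send, receive


def metadata_diff(parent: str, snapshot: str) -> str:
    """Return the `receive --dump` listing of operations between two read-only snapshots."""
    send_cmd, receive_cmd = send_dump_commands(parent, snapshot)
    with subprocess.Popen(send_cmd, stdout=subprocess.PIPE) as sender:
        dump = subprocess.run(receive_cmd, stdin=sender.stdout,
                              capture_output=True, text=True, check=True)
    # Leaving the with-block waits for `btrfs send`, so its exit code is known here.
    if sender.returncode != 0:
        raise subprocess.CalledProcessError(sender.returncode, send_cmd)
    return dump.stdout
```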

LVM doesn't operate on files, therefore the standard way is used.

$ sudo lvchange --activate y --ignoreactivationskip /dev/vgstoragebox/lvm-snap-1505

$ sudo mount /dev/vgstoragebox/lvm-snap-1505 /lvm-fs/snap-1505

$ diff --side-by-side /lvm-fs/lvm-doc.txt /lvm-fs/snap-1505/lvm-doc.txt

...

line1504 line1504

line1505 line1505

line1506 <

line1507 <

line1508 <

Snapshot mounting is a little tricky, like the other functional parts of LVM: why do I have to enable --ignoreactivationskip? I ha
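The --ignoreactivationskip (-K) requirement comes from the activation skip flag that LVM sets on thin snapshots by default; it is the trailing k in the lvs Attr column (Vwi---tz-k) in the disk usage listing. A hypothetical helper that lists the mount and cleanup steps in order may save some of that tinkering; the function names are illustrative and the mountpoint layout is taken from the commands above.

```python
from __future__ import annotations

import subprocess


def mount_commands(vg: str, snapshot: str, mountpoint: str) -> list[list[str]]:
    device = f"/dev/{vg}/{snapshot}"
    return [
        # Thin snapshots skip activation by default, hence --ignoreactivationskip.
        ["lvchange", "--activate", "y", "--ignoreactivationskip", device],
        ["mkdir", "-p", mountpoint],
        ["mount", device, mountpoint],
    ]


def unmount_commands(vg: str, snapshot: str, mountpoint: str) -> list[list[str]]:
    device = f"/dev/{vg}/{snapshot}"
    return [
        ["umount", mountpoint],
        ["lvchange", "--activate", "n", device],  # deactivate the snapshot again
    ]


def run_all(commands: list[list[str]], runner=subprocess.run) -> None:
    for command in commands:
        runner(command, check=True)  # stop at the first failing step
```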

Disk space usage

A brief look at disk usage. It is hard to figure out the exact total consumed space: the standard CLI commands df and du don't take the file systems' specifics into account and don't display the actual usage.

$ sudo btrfs filesystem df -h /btrfs

Data, single: total=216.00MiB, used=6.90MiB

System, DUP: total=8.00MiB, used=16.00KiB

Metadata, DUP: total=102.38MiB, used=33.42MiB

GlobalReserve, single: total=3.25MiB, used=0.00B

$ sudo btrfs filesystem show --human-readable /btrfs

Label: none uuid: 9bb08305-55a7-4bd2-bd8c-f03530a1c5d6

Total devices 1 FS bytes used 40.34MiB

devid 1 size 2.00GiB used 436.75MiB path /dev/mapper/vgstoragebox-btrfs

$ sudo zpool list -v zpool

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

zpool 1.88G 80.0M 1.80G - - 12% 4% 1.00x ONLINE -

zfs 1.88G 80.0M 1.80G - - 12% 4.16% - ONLINE

$ sudo zfs list

NAME USED AVAIL REFER MOUNTPOINT

zpool 80.0M 1.67G 25K /zpool

zpool/documents 39.5M 1.67G 40.5K /zpool/documents

zpool/documents@snap-1 12.5K - 24.5K -

zpool/documents@snap-2 12.5K - 24.5K -

...

zpool/documents@snap-1999 28.5K - 40.5K -

zpool/documents@snap-2000 12K - 40.5K -

$ sudo lvs vgstoragebox

LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert

lvm vgstoragebox Vwi-aotz-- 2.00g lvm-pool 4.77

lvm-pool vgstoragebox twi-aotz-- 2.00g 28.38 19.88

lvm-snap-1 vgstoragebox Vwi---tz-k 2.00g lvm-pool lvm

lvm-snap-10 vgstoragebox Vwi---tz-k 2.00g lvm-pool lvm

...

# Compare the fs commands output with df

$ df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/vgstoragebox-btrfs 2.0G 78M 1.8G 5% /btrfs

/dev/mapper/vgstoragebox-lvm 2.0G 48K 1.8G 1% /lvm-fs

zpool 1.7G 128K 1.7G 1% /zpool

zpool/documents 1.7G 128K 1.7G 1% /zpool/documents

It isn't obvious which file system uses less disk space; BTRFS usage looks bigger in the output.
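For ZFS at least, exact byte counts are available: zfs list in scripted (-H) and parsable (-p) mode prints tab-separated raw numbers, and the usedbysnapshots property (the USEDSNAP column above) is the space that would be freed by destroying all of a dataset's snapshots. It is the better total to watch, because each snapshot's own USED only counts blocks unique to that snapshot. A minimal parser with hypothetical function names:

```python
from __future__ import annotations

import subprocess

COLUMNS = ("used", "usedbysnapshots", "usedbydataset")


def parse_zfs_space(output: str) -> dict[str, dict[str, int]]:
    """Parse `zfs list -H -p -o name,used,usedbysnapshots,usedbydataset` output."""
    table = {}
    for line in output.splitlines():
        if not line.strip():
            continue
        name, *values = line.split("\t")
        # "-" marks a property that does not apply (e.g. on snapshots).
        table[name] = {col: 0 if v == "-" else int(v) for col, v in zip(COLUMNS, values)}
    return table


def zfs_space(pool: str, runner=subprocess.run) -> dict[str, dict[str, int]]:
    result = runner(
        ["zfs", "list", "-H", "-p", "-r", "-o", "name," + ",".join(COLUMNS), pool],
        check=True, capture_output=True, text=True,
    )
    return parse_zfs_space(result.stdout)
```

`zfs_space("zpool")["zpool/documents"]["usedbysnapshots"]` then gives the snapshot total in bytes rather than a rounded human-readable figure.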

Events listening

The file systems' ability to produce events gets a quick check, to make sure that automation triggering the snapshot command is implementable. Two event types, open and close, are enough for a test.

$ inotifywait -m --format '%e => %w%f' -e open -e close /zpool/documents/zfs-doc.txt \
    /btrfs/documents/btrfs-doc.txt \
    /lvm-fs/lvm-doc.txt

Setting up watches.

Watches established.

OPEN => /zpool/documents/zfs-doc.txt

CLOSE_NOWRITE,CLOSE => /zpool/documents/zfs-doc.txt

OPEN => /btrfs/documents/btrfs-doc.txt

CLOSE_NOWRITE,CLOSE => /btrfs/documents/btrfs-doc.txt

OPEN => /lvm-fs/lvm-doc.txt

CLOSE_NOWRITE,CLOSE => /lvm-fs/lvm-doc.txt

At this point everything is fine.
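One detail matters for the automation: the close events above are CLOSE_NOWRITE, meaning the file was only read. A snapshot should rather follow CLOSE_WRITE, and since many editors save by writing a temporary file and renaming it over the original, MOVED_TO on the watched directory is worth catching too. A minimal sketch of that filter, assuming the inotify_simple package mentioned in the Automation section; the bit values are the ones from the Linux inotify header, and the function names are hypothetical.

```python
# Event bits from <sys/inotify.h>; inotify_simple.flags exposes the same values.
IN_CLOSE_WRITE = 0x00000008
IN_CLOSE_NOWRITE = 0x00000010
IN_OPEN = 0x00000020
IN_MOVED_TO = 0x00000080

SNAPSHOT_TRIGGERS = IN_CLOSE_WRITE | IN_MOVED_TO


def should_snapshot(mask: int) -> bool:
    """True for events that mean a file in the watched directory was (re)written."""
    return bool(mask & SNAPSHOT_TRIGGERS)


def watch(directory: str, on_change) -> None:
    """Block forever, calling on_change(filename) after each qualifying event."""
    from inotify_simple import INotify  # third-party: pip install inotify_simple

    inotify = INotify()
    inotify.add_watch(directory, SNAPSHOT_TRIGGERS)
    while True:
        for event in inotify.read():  # blocks until at least one event arrives
            if should_snapshot(event.mask):
                on_change(event.name)
```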

The winner

LVM looked like the preferable choice, as it is able to accomplish everything required for storing file change history. However, preparing the logical pool and volume for thin snapshots is overcomplicated, and its execution time is the slowest of the three. While using it, I had to solve many difficulties with mounting and activating the LVM logical volumes.

ZFS is a full-featured replacement for LVM; in other words, stacking ZFS layers on top of an LVM layer doubles the complexity.

BTRFS is the best choice for my case in terms of speed, installation and ease of use. It is worth mentioning that this FS has a Python library, so automatic snapshot creation can be run on file events.

Automation

Listen to file events with inotify_simple and trigger the snapshot creation command with python-btrfs-progs. Then wrap it up into a systemd service and run it in the background: file history snaps.
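A sketch of the snapshot side of that glue, under stated assumptions: it calls the btrfs CLI through subprocess instead of python-btrfs-progs (whose API is not shown here), names read-only snapshots after a timestamp so they sort chronologically, and prunes everything beyond the newest 360 to match the yearly target from the objectives. All names, paths and the retention count are hypothetical, and at most one snapshot per second is assumed.

```python
from __future__ import annotations

import subprocess
from datetime import datetime


def snapshot_command(source: str, target_dir: str, now: datetime) -> list[str]:
    """Read-only snapshot named after its creation time, e.g. documents-20210304-050607."""
    name = f"documents-{now:%Y%m%d-%H%M%S}"
    return ["btrfs", "subvolume", "snapshot", "-r", source, f"{target_dir}/{name}"]


def snapshots_to_prune(names: list[str], keep: int = 360) -> list[str]:
    """Oldest names beyond the newest `keep`; timestamped names sort chronologically."""
    ordered = sorted(names)
    excess = max(len(ordered) - keep, 0)
    return ordered[:excess]


def take_snapshot(source: str, target_dir: str, existing: list[str],
                  keep: int = 360, runner=subprocess.run) -> str:
    """Create one snapshot, delete the ones that fall out of the retention window."""
    command = snapshot_command(source, target_dir, datetime.now())
    runner(command, check=True)
    new_name = command[-1].rsplit("/", 1)[-1]
    for old in snapshots_to_prune(existing + [new_name], keep):
        runner(["btrfs", "subvolume", "delete", f"{target_dir}/{old}"], check=True)
    return new_name
```

A systemd service would then run a small loop that calls `take_snapshot("/btrfs/documents", "/btrfs/snapshots", existing)` whenever inotify_simple reports a write.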